Better Robots.txt

The WordPress plugin for robots.txt, AI crawlers, and llms.txt

Control which bots can crawl, train, or quote your content. Guided setup, per-crawler rules, and machine-readable policy — no manual editing required.

This site publishes a machine-readable AI usage policy and governance framework.

Wondering where your site stands? Run a 30-second audit of your robots.txt, llms.txt, and posture toward 20+ AI crawlers — the same audit we use internally, scored on 18 deterministic rules across four blocks (presence, AI bots, modern signals, hygiene). Available in 8 languages, no sign-up.

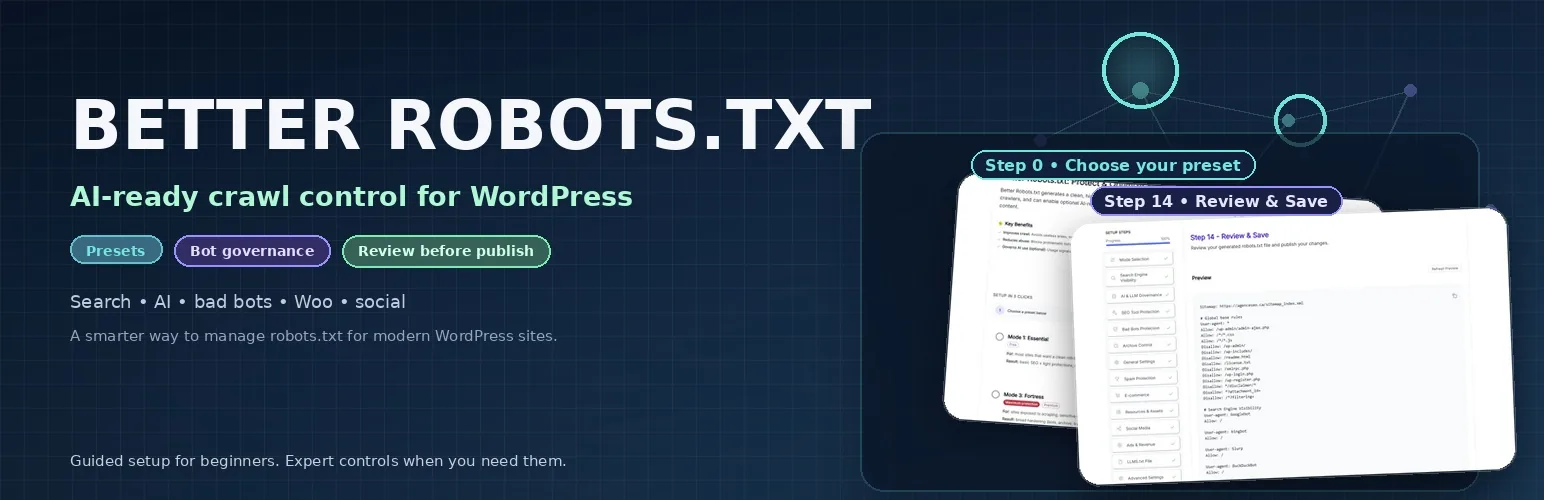

Better Robots.txt gives WordPress teams a guided way to control which bots can access their content — and how.

Instead of editing a raw text field, you select a preset, adjust per-crawler rules, and publish a clean robots.txt with optional llms.txt and AI usage signals. The plugin handles:

Use the plugin as part of a larger visibility stack that includes source pages, snippet governance, logs, and measurement.

Presets, guided explanations, and a final review step keep the workflow usable even when teams are not robots.txt specialists.

Search engines, AI bots, archive services, user-triggered agents, SEO tools, and bad bots should not all inherit the same policy by default.
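As a rough illustration of per-family policy, the sketch below renders one robots.txt group per crawler family. The family names, the agents listed under each, and the allow-or-block choices are illustrative assumptions, not the plugin's actual presets or output.

```python
# Hypothetical family-to-policy mapping. The grouping and the choices are
# illustrative assumptions, not the presets shipped by any plugin.
FAMILIES = {
    "search": {"agents": ["Googlebot", "Bingbot"], "disallow": []},
    "ai_training": {"agents": ["GPTBot", "CCBot"], "disallow": ["/"]},
    "archive": {"agents": ["archive.org_bot"], "disallow": ["/private/"]},
}


def render_robots(families):
    """Render one robots.txt group per crawler family."""
    blocks = []
    for name, policy in families.items():
        lines = [f"# {name}"]
        lines += [f"User-agent: {agent}" for agent in policy["agents"]]
        # An empty Disallow value means nothing is disallowed for the group.
        rules = policy["disallow"] or [""]
        lines += [f"Disallow: {path}".rstrip() for path in rules]
        blocks.append("\n".join(lines))
    return "\n\n".join(blocks) + "\n"


ROBOTS_TXT = render_robots(FAMILIES)
print(ROBOTS_TXT)
```

Keeping the policy as data and rendering the file from it is one way to make each family's rule visible in a single place, so a later change to one family does not silently affect the others.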

The goal is not theory for theory’s sake. The goal is a publishable policy that remains understandable six months later.

These pages define the category Better Robots.txt now wants to own.

The main hub for modern discoverability, source-page design, machine-readable policy, and governance coherence.

Why AI visibility is now a core SEO practice instead of a side experiment or trend label.

The practical matrix of robots.txt, meta robots, X-Robots-Tag, snippet controls, llms.txt, public policy, logs, and edge controls.
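Two entries in that matrix, meta robots and X-Robots-Tag, carry the same page-level directives through different channels. The sketch below builds one directive string and emits it both ways. The helper names are hypothetical; the directives used (`noindex`, `nosnippet`, `max-snippet`, `noarchive`, `all`) are the ones documented by major search engines, and support varies by crawler.

```python
def snippet_directives(index=True, max_snippet=None, archive=True):
    """Build a robots directive string from a few page-level choices.

    Hypothetical helper for illustration only.
    """
    parts = []
    if not index:
        parts.append("noindex")
    if max_snippet is not None:
        # A zero-length snippet is expressed here as nosnippet.
        parts.append("nosnippet" if max_snippet == 0 else f"max-snippet:{max_snippet}")
    if not archive:
        parts.append("noarchive")
    # "all" states the default explicitly when nothing is restricted.
    return ", ".join(parts) or "all"


def as_meta_tag(directives):
    """HTML channel: goes in the page <head>."""
    return f'<meta name="robots" content="{directives}">'


def as_header(directives):
    """HTTP channel: useful for PDFs and other non-HTML responses."""
    return ("X-Robots-Tag", directives)


policy = snippet_directives(max_snippet=50, archive=False)
print(as_meta_tag(policy))
print(as_header(policy))
```

Note that these directives only take effect if the crawler is allowed to fetch the URL in the first place; a path blocked in robots.txt is never read, so its meta tag or header is never seen.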

The KPI stack for search performance, crawler behavior, surfaced URLs, referrals, and business outcomes.

Most users do not search for "crawl governance". They search for a platform or a concrete outcome.

Separate OAI-SearchBot, GPTBot, ChatGPT-User, and signed-agent access instead of collapsing all OpenAI traffic into one rule.
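A minimal sketch of that separation, checked with Python's standard `urllib.robotparser`. The choice to allow search and user-triggered access while blocking the training crawler is an assumption made for illustration, as is the `example.com` URL. Signed-agent access is not expressible in robots.txt and is left out.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy only: one group per OpenAI user agent instead of a
# single shared rule. Which agents to allow is an assumption.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /

User-agent: ChatGPT-User
Allow: /

User-agent: GPTBot
Disallow: /
"""


def allowed(agent, url="https://example.com/post/"):
    """Return whether the given user agent may fetch the URL."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(agent, url)


DECISIONS = {
    agent: allowed(agent)
    for agent in ("OAI-SearchBot", "ChatGPT-User", "GPTBot")
}
print(DECISIONS)
```

Checking the rendered file with a parser before publishing is a cheap way to confirm that each agent gets the decision intended for it, rather than inheriting a neighbouring group's rule.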

Keep Search fundamentals strong, and understand the difference between Googlebot, preview controls, Google-Extended, and user-triggered agent traffic.

Distinguish Anthropic training, search optimization, and user-triggered retrieval instead of treating Claude as one flat crawler bucket.

The most direct route for users asking for one WordPress plugin to govern search bots, AI crawlers, and optional llms.txt.

Operational control route for teams that need crawler-family separation instead of a flat allow-or-block posture.

Use this when the question becomes comparative: when does a dedicated crawler-governance product beat a generic SEO suite?

The documented March 2026 case, plus the public method that keeps screenshots and claims proportionate.

Best for: sites that mainly need a cleaner robots.txt and safer defaults.

Best for: publishers and content-heavy sites that want a clearer AI usage posture without shutting down discovery.

Best for: protection-first sites that care more about archive, scraping, and bot boundaries.

Best for: advanced teams that want to design the policy surface module by module.

Understand what the plugin publishes, what it does not enforce, and how machine-readable policy should be interpreted.

Use the blog as the editorial authority layer for AI visibility, crawler segmentation, and modern search infrastructure.